About Collector

The Visym Labs team is working to fundamentally rethink computer vision dataset collection to be privacy preserving by design. The traditional approach to vision dataset construction is to (i) set up cameras (or scrape the web) to get raw videos and imagery, (ii) send them to an annotation team to provide ground truth labels in the form of bounding boxes or masks, categories, or clip times for activities, then (iii) send the annotations to a verification team to enforce quality. This approach is slow, expensive, biased, and hard to scale, and it almost never obtains consent from every subject with a visible face. The Visym team believes there is a better way. We construct visual datasets by enabling thousands of collectors worldwide to submit videos using a new mobile app. This mobile app allows collectors to annotate a video while recording it, which creates labeled videos in real time that contain only people who have explicitly consented to be included. Collector has been used to curate millions of videos, and we believe it can help meet the constant demand for more data.

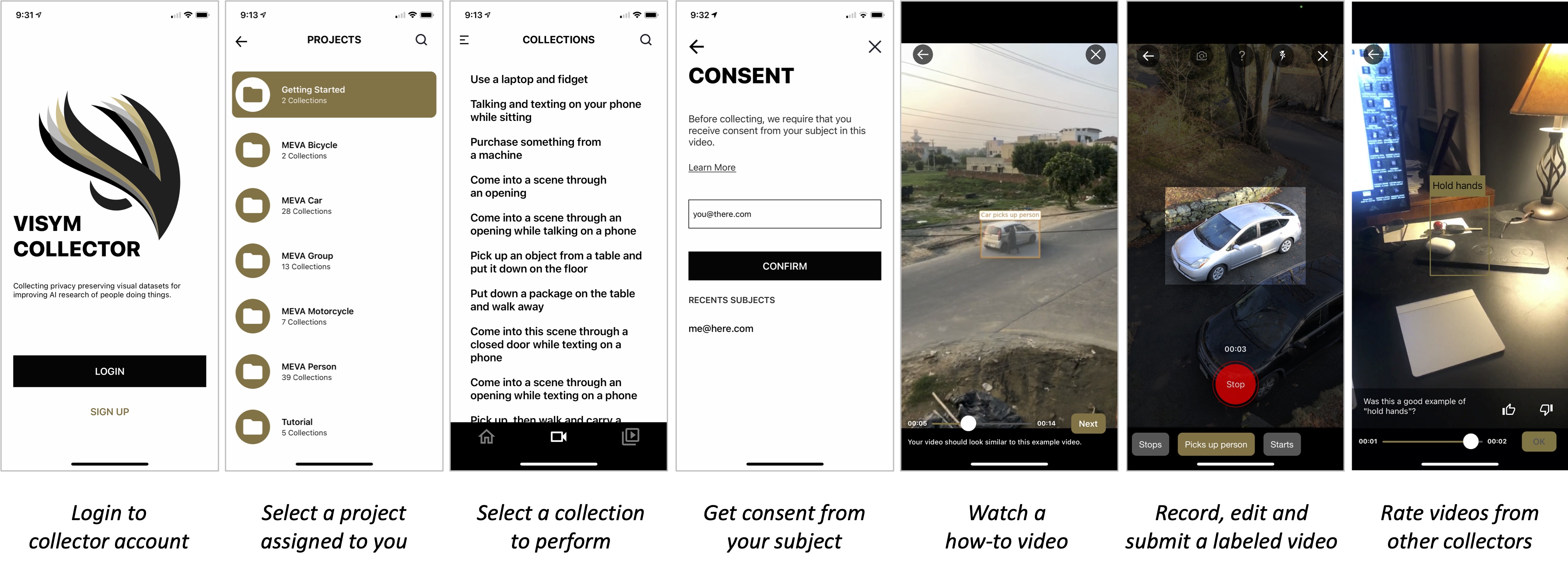

How does it work?

Visym Collector operates as follows. Collectors are invited onto the platform, and they download our mobile app to their device. Collectors select a project grouped by the required objects (e.g. a car, a motorcycle), then the collector obtains consent from their subject. The consent procedure includes a video consent to confirm that the subject in the video is the consented subject. Next, the collector watches an example video, then instructs their subject to perform the activity in the same way. The collector records and annotates the video live using gestures on their device touchscreen, then corrects any labeling errors using an in-app annotation editor and submits the video to the collector team for review. Annotations include bounding boxes around objects, object labels, and start and end times for each activity in the collection, all collected while the video is being recorded. Finally, each collector is required to watch videos from other collectors and vote for good examples to achieve distributed consensus for collection quality. In other words, collectors "see one, do one, and teach one" in our data collection platform.
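To make the shape of a submission concrete, here is a minimal Python sketch of the kind of record the workflow above could produce: object labels with bounding boxes, activity start and end times, a consent flag, and peer votes. This is an illustration only, not the actual Collector schema or API. Every class name, field name, and the acceptance rule (a minimum vote count and a strict majority) is an assumption made for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BoundingBox:
    # Assumed convention: top-left corner plus size, in pixels.
    x: float
    y: float
    width: float
    height: float


@dataclass
class ObjectTrack:
    label: str  # e.g. "car" or "motorcycle"
    boxes: List[BoundingBox] = field(default_factory=list)  # one box per annotated frame


@dataclass
class ActivityInterval:
    label: str        # hypothetical activity name
    start_sec: float  # start time within the video
    end_sec: float    # end time within the video

    def duration(self) -> float:
        return self.end_sec - self.start_sec


@dataclass
class Submission:
    video_id: str
    subject_consented: bool
    objects: List[ObjectTrack] = field(default_factory=list)
    activities: List[ActivityInterval] = field(default_factory=list)
    votes: List[bool] = field(default_factory=list)  # True = a peer voted "good example"

    def approval_rate(self) -> float:
        # Fraction of peer votes that were positive; 0.0 when nobody has voted yet.
        return sum(self.votes) / len(self.votes) if self.votes else 0.0

    def is_accepted(self, min_votes: int = 3, min_approval: float = 0.5) -> bool:
        # Hypothetical rule: consent is mandatory, enough peers must have voted,
        # and strictly more than min_approval of them must have approved.
        return (
            self.subject_consented
            and len(self.votes) >= min_votes
            and self.approval_rate() > min_approval
        )


# Example usage with made-up values.
submission = Submission(
    video_id="example-001",
    subject_consented=True,
    objects=[ObjectTrack("car", [BoundingBox(10, 20, 200, 120)])],
    activities=[ActivityInterval("person_opens_car_door", 1.5, 4.0)],
    votes=[True, True, False],
)
print(submission.is_accepted())
```

Keeping the consent flag on the same record as the labels and votes mirrors the idea that a video without a consented subject should never pass review, however well it is annotated.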

Why Collector?

Visym Collector enables collecting rare and custom activities at large scale while maintaining the quality of the labeled dataset. We provide video recording, annotation, stabilization and verification in a single platform to curate high-quality consented datasets for training visual AI. Traditional dataset curation requires gathering a set of actors, obtaining consent following an IRB protocol, collecting videos, sending the videos to a labeling team, then sending the labels to a verification team for quality control. This is an expensive and time-consuming process. Collector combines consenting, recording and annotation into a single step, and uses distributed verification to deliver dataset curation at an order of magnitude lower cost than existing methods. In the video below, every person has consented to having their personally identifiable information included in our dataset, and all videos in the montage were submitted from collectors in over fifty countries in about ten minutes. We believe that Collector is a powerful new tool for ethical dataset collection for computer vision.

Who should use Collector?

Collector was designed as a platform for consented, on-demand data collection. Organizations that perform human subjects research and require large visual datasets of people for training computer vision systems can work with Visym Labs to define a custom data collection campaign. We will show you that the curation costs using Visym Collector are an order of magnitude lower than existing methods. We will work with you and your Institutional Review Board (IRB) to customize our platform for your needs. We will also provide you access to our distributed collector team on five continents and in over fifty countries to implement your collection campaign. Please contact info@visym.com for more details on working with us.